The state of AI Computer Use

Let's talk about computer use, a key technology powering a wave of new startups, including ours.

What: Popularized by Anthropic in late 2024, computer use lets language models control a computer much like a human would. At its core is a sampling loop:

1. The agent is given a task (e.g. "book a flight").

2. Take a screenshot of the UI.

3. Decide on the next action (e.g. click, type).

4. Execute that action.

5. Repeat steps 2 to 4 until the task is done.
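The loop above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's API: `Action`, `FakeScreen`, `run_agent`, and `scripted_policy` are hypothetical names. The "screenshot" is a dict of form fields rather than an image, and the policy is a scripted stub standing in for an LLM call.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Action:
    kind: str         # "click", "type", or "done" (hypothetical action set)
    target: str = ""
    text: str = ""


@dataclass
class FakeScreen:
    """Hypothetical stand-in for a real UI; a real agent would capture pixels."""
    fields: dict = field(default_factory=dict)
    log: list = field(default_factory=list)

    def screenshot(self) -> dict:
        # Step 2: observe the current state (a copy, so the policy can't mutate it).
        return dict(self.fields)

    def execute(self, action: Action) -> None:
        # Step 4: apply the action to the UI and record it.
        self.log.append(action)
        if action.kind == "type":
            self.fields[action.target] = action.text


# A policy maps (task, observation) to the next action; in practice this is an LLM call.
Policy = Callable[[str, dict], Action]


def run_agent(task: str, screen: FakeScreen, policy: Policy, max_steps: int = 20) -> bool:
    """Run the sampling loop; return True if the policy declares the task done."""
    for _ in range(max_steps):
        observation = screen.screenshot()   # step 2
        action = policy(task, observation)  # step 3
        if action.kind == "done":           # step 5: stop when the task is complete
            return True
        screen.execute(action)              # step 4
    return False  # gave up after max_steps


def scripted_policy(task: str, observation: dict) -> Action:
    # Hypothetical stub: fills two fields, then reports completion.
    if "from" not in observation:
        return Action("type", "from", "SFO")
    if "to" not in observation:
        return Action("type", "to", "JFK")
    return Action("done")


if __name__ == "__main__":
    screen = FakeScreen()
    finished = run_agent("book a flight from SFO to JFK", screen, scripted_policy)
    print(finished, screen.fields)  # True {'from': 'SFO', 'to': 'JFK'}
```

Capping the loop with `max_steps` is a design choice in this sketch: it keeps a confused agent from looping forever, at the cost of giving up on long tasks.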

Why: Not every system has an API for conventional LLM tool calling, and not every company wants to expose one. But nearly everything can be done through a UI. Computer use opens up an entirely new action space for LLMs: anything a human can do on a computer, an agent can learn to do too. The next step is "robot use": giving agents hands and eyes in the physical world.

Who: Anthropic and OpenAI have both released computer use APIs. There are also numerous open-source implementations, and at the time of writing the top-performing agents on the OSWorld benchmark are multi-agent designs that mix open- and closed-source models.
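One common way to split the work in such multi-agent designs, assumed here for illustration rather than taken from any specific leaderboard entry, is a planner model that decides what to do next in plain language and a separate grounding model that turns that instruction into screen coordinates. Because the roles are separate, each can be filled by a different model, open or closed source. In this minimal Python sketch, `make_policy`, `toy_planner`, and `toy_grounder` are hypothetical stubs, not real models, and the "screen" is a toy dict of element labels rather than a screenshot.

```python
from typing import Callable, Dict, Optional, Tuple

Point = Tuple[int, int]
# Toy stand-in for a screenshot: visible element labels mapped to pixel centers.
Screen = Dict[str, Point]

Planner = Callable[[str, Screen], str]
Grounder = Callable[[str, Screen], Optional[Point]]


def make_policy(planner: Planner, grounder: Grounder):
    """Compose a planner and a grounder into a single policy for the agent loop."""

    def policy(task: str, screen: Screen) -> Tuple[str, Optional[Point]]:
        instruction = planner(task, screen)    # what to do, in plain language
        point = grounder(instruction, screen)  # where to do it, in pixels (or None)
        return instruction, point

    return policy


def toy_planner(task: str, screen: Screen) -> str:
    # Hypothetical stand-in for a planning model.
    return "click Search" if "Search" in screen else "scroll down"


def toy_grounder(instruction: str, screen: Screen) -> Optional[Point]:
    # Hypothetical stand-in for a grounding model: only "click <label>" maps to a point.
    verb, _, label = instruction.partition(" ")
    return screen.get(label) if verb == "click" else None


if __name__ == "__main__":
    policy = make_policy(toy_planner, toy_grounder)
    print(policy("book a flight", {"Search": (640, 380), "Help": (40, 20)}))
    # ('click Search', (640, 380))
```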

Tech: There are different approaches under the hood, from pixel-space models that act directly on screenshots to DOM-based tagging systems that label a page's interactive elements for the model. I'll dive into those tradeoffs in a later post.
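To make the two ends of that spectrum a little more concrete, here is a rough Python sketch using only the standard library. The DOM-based half assigns numeric IDs to interactive elements so a model can answer with something like "click [2]" instead of coordinates; the pixel-space half shows the coordinate rescaling needed when a model sees a downscaled screenshot. The names `ElementTagger`, `tag_elements`, `describe`, and `scale_point`, the set of tags treated as interactive, and the 1280x800 model resolution are all illustrative assumptions, not any product's implementation.

```python
from html.parser import HTMLParser

# Tags treated as actionable in this sketch (an assumption; real systems also
# consider ARIA roles, click handlers, and visibility).
INTERACTIVE_TAGS = {"a", "button", "input", "select", "textarea"}


class ElementTagger(HTMLParser):
    """Collects interactive elements and assigns each a sequential numeric ID."""

    def __init__(self) -> None:
        super().__init__()
        self.elements: list = []  # (id, tag, attrs) tuples

    def handle_starttag(self, tag, attrs):
        if tag in INTERACTIVE_TAGS:
            self.elements.append((len(self.elements), tag, dict(attrs)))


def tag_elements(html: str) -> list:
    tagger = ElementTagger()
    tagger.feed(html)
    tagger.close()
    return tagger.elements


def describe(elements) -> str:
    """Render tagged elements as the text list a model would read."""
    lines = []
    for idx, tag, attrs in elements:
        label = attrs.get("aria-label") or attrs.get("name") or attrs.get("href") or ""
        lines.append(f"[{idx}] <{tag}> {label}".rstrip())
    return "\n".join(lines)


def scale_point(x, y, model_size=(1280, 800), screen_size=(2560, 1600)):
    """Map a click predicted on a downscaled screenshot back to real screen pixels."""
    sx = screen_size[0] / model_size[0]
    sy = screen_size[1] / model_size[1]
    return round(x * sx), round(y * sy)


if __name__ == "__main__":
    page = ('<input name="from"><input name="to">'
            '<button aria-label="Search flights">Search</button><a href="/help">Help</a>')
    print(describe(tag_elements(page)))
    # [0] <input> from
    # [1] <input> to
    # [2] <button> Search flights
    # [3] <a> /help
    print(scale_point(640, 400))  # (1280, 800)
```

The tradeoff shows up even at this size: the tagged list is compact and unambiguous but only exists where there is a DOM, while the pixel path works on any screen but depends on the model predicting accurate coordinates.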

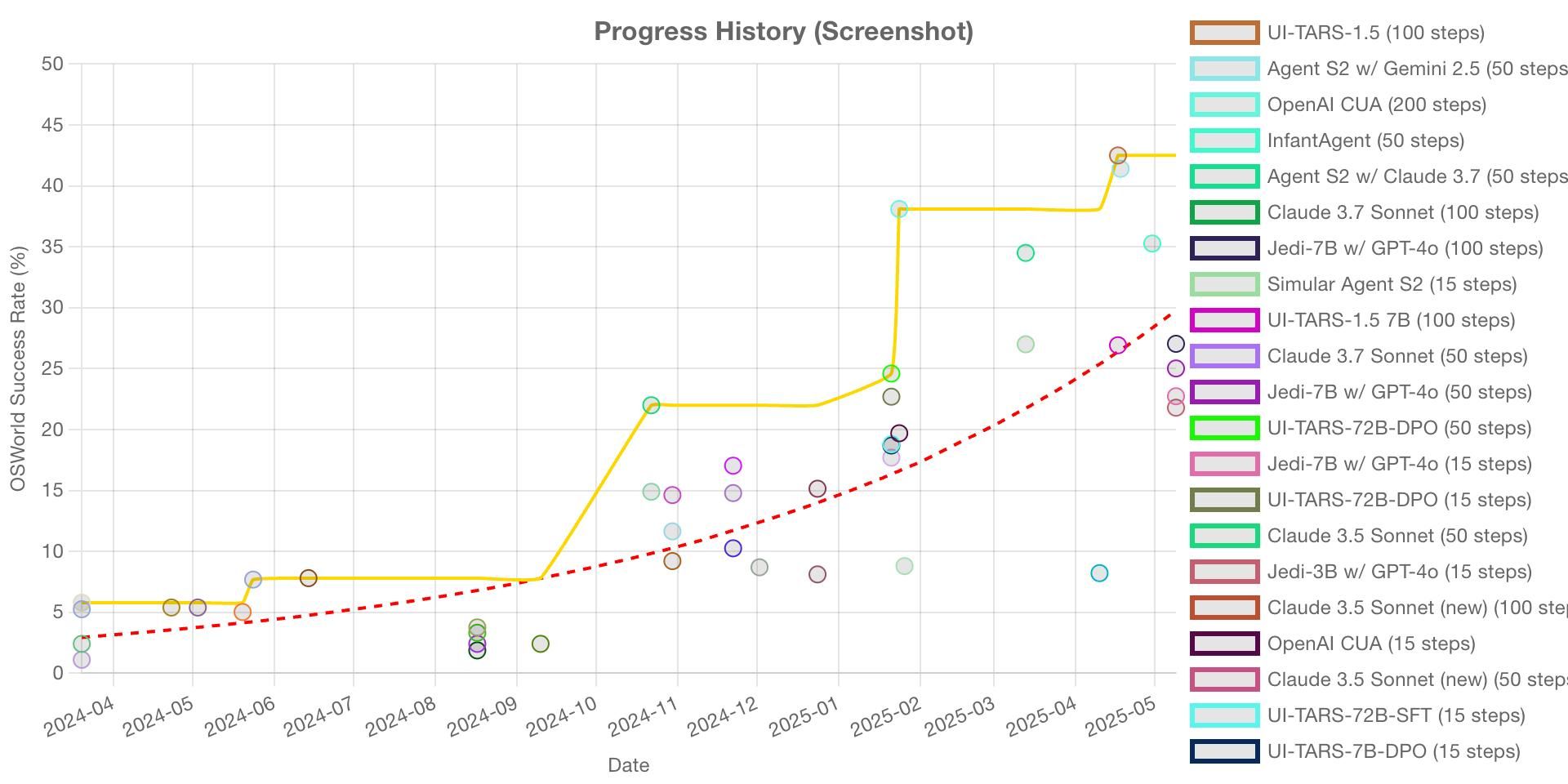

What's next: Agents still struggle with latency, planning, and error recovery. Many researchers, model companies, and startups like ours are working to push the frontier. The chart above shows progress on the OSWorld benchmark (https://os-world.github.io). We expect these models to become far more capable very soon.

At QualGent (YC X25), we're using computer use to build AI agents that test mobile applications the way real users do: navigating through interfaces, detecting issues, and providing actionable insights. We believe this is the future of mobile QA in an AI-native world.

Learn more about how we're applying computer use to mobile testing 👉 https://qualgent.ai/